A paper investigating the relevance of (pre-calculated) features for 3D point cloud classification using deep learning has just been published in the ISPRS Annals of the Photogrammetry, Remote Sensing and Spatial Information Sciences.

The study presents a non-end-to-end deep learning classifier for 3D point clouds that uses multiple sets of input features, and compares it with an implementation of the state-of-the-art deep learning framework PointNet++. Classification accuracy improves by up to 33% when normal vector features computed with multiple search radii and features describing the spatial point distribution are included. The method achieves a mean Intersection over Union (mIoU) of 94%, outperforming PointNet++’s Multi-Scale Grouping by up to 12%. The paper highlights the importance of using multiple search radii for different point cloud features when classifying an urban 3D point cloud scene acquired by terrestrial laser scanning.
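For readers unfamiliar with the mIoU metric mentioned above, here is a minimal sketch of how it is commonly computed from per-point labels. It is not the evaluation code from the paper: the function name `mean_iou`, the toy labels, and the class names are illustrative assumptions only.

```python
from collections import Counter


def mean_iou(y_true, y_pred, classes=None):
    """Mean Intersection over Union (mIoU) over classes for per-point labels.

    Hypothetical helper for illustration; not taken from the paper.
    """
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    if classes is None:
        classes = sorted(set(y_true) | set(y_pred))

    n_true = Counter(y_true)  # points per class in the ground truth
    n_pred = Counter(y_pred)  # points per class in the prediction
    n_both = Counter(t for t, p in zip(y_true, y_pred) if t == p)  # intersection

    ious = []
    for c in classes:
        union = n_true[c] + n_pred[c] - n_both[c]
        if union == 0:
            continue  # class absent from both label sets, so IoU is undefined
        ious.append(n_both[c] / union)
    if not ious:
        raise ValueError("no class occurs in the labels")
    return sum(ious) / len(ious)


# Toy example with made-up class names (assumption, not data from the study).
truth = ["building", "building", "vegetation", "ground", "ground", "ground"]
pred = ["building", "vegetation", "vegetation", "ground", "ground", "building"]

# Per-class IoU is 1/3 (building), 2/3 (ground) and 1/2 (vegetation),
# so the mean is about 0.5.
print(mean_iou(truth, pred))
```

Because every class contributes equally to the mean, a class with few points that is classified poorly lowers the mIoU noticeably, even when overall point-wise accuracy stays high.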

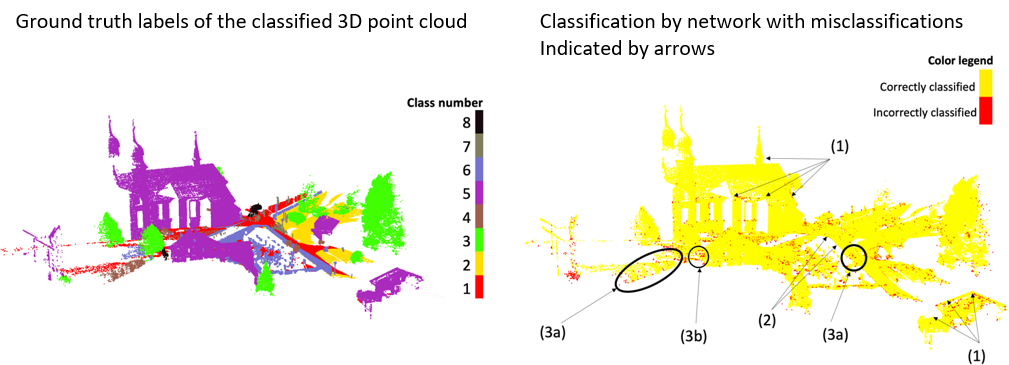

The study uses point clouds from the Semantic3D dataset, a labelled 3D point cloud dataset of geographic scenes. The figure below shows the labelled 3D point cloud used as ground truth in the paper, together with a result of the deep learning classifier for one of the feature sets (cf. Kumar et al. 2019).

Wondering how the misclassifications in the result can be explained? Find all the details in the paper:

Kumar, A., Anders, K., Winiwarter, L., and Höfle, B. (2019). FEATURE RELEVANCE ANALYSIS FOR 3D POINT CLOUD CLASSIFICATION USING DEEP LEARNING, ISPRS Ann. Photogramm. Remote Sens. Spatial Inf. Sci., IV-2/W5, 373-380, DOI: 10.5194/isprs-annals-IV-2-W5-373-2019

The research will be presented at the ISPRS Geospatial Week 2019 at the University of Twente in Enschede (NL) in the Machine & Deep Learning session on Wednesday, 12th June 2019, 9:00 am – 10:30 am.

Will you be there?

The 3DGeo group will also present another research topic (Anders et al. 2019) from the Auto3Dscapes project in the Change Detection session.